Note: This article originally appeared in my column for Gamasutra.

Okay folks, I’m going to nerd out a bit but bear with me. There was this show that my wife used to like watching called Star Trek: The Next Generation. In one episode Captain Picard is being held captive by the Evil Alien of the Week. Said Evil Alien twirls his space mustache, gestures to a bank of four lights, and asks Picard how many lights he sees. When Picard says “Four,” Evil Alien is all like “No way, dude, there are FIVE lights,” but Picard is like “F you, buddy. There are only four lights.” Also there are painful electric shocks involved, but Picard refuses to see five lights.

Turns out that most of us are no Jean-Luc Picard 1, because we’re apt to disbelieve evidence obvious to our own eyes when the conditions are right. And we don’t even need a big scary alien dude looming over us; all we need are a few strangers in the room with us saying that they totally see five lights.

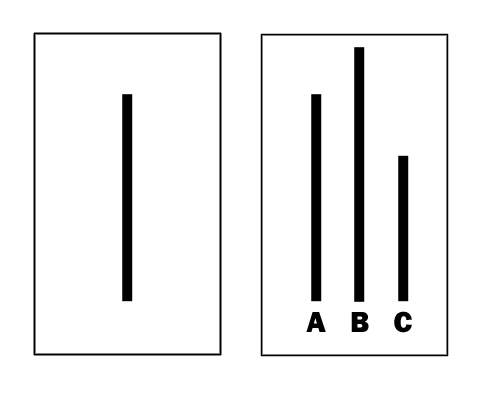

In the 1950s, psychologist Solomon Asch conducted a series of experiments 2 where he gave members of a group an index card with a line drawn on it. Asch then projected a set of three different lines onto a screen and asked subjects to identify which one matched the one on the cards. The three lines on the screen differed obviously in length, making the task so simple that anyone with two eyes and a brain behind them could get it right every time. Heck, in a pinch one eye would do. It looked kind of like this:

Which of the lines on the right matches the one on the left?

And so subjects performed admirably for the first three rounds or so. But eventually one or two subjects in the group started to immediately give answers that were obviously wrong. Like saying Line A was the longest when it was clearly the shortest. Very quickly, more and more subjects started repeating the obvious mistake, saying things that would clearly look wrong to any starship Captain.

WTF? What was going on? Well, what was going on was that only one person in each group was actually a subject. The rest were actors in the employ of the experimenter 3 and were purposely jumping in with obviously wrong answers just to see what the real subject would do. Turns out that three quarters of the subjects in these experiments let their choice be influenced by the others at least once, even when it should have been obvious that this was bananas. What’s more, in post-experiment debriefing interviews, subjects rationalized their choices by saying that their initial observations must have been wrong if everyone else was saying the opposite. They weren’t just PRETENDING to see things differently; they REALLY DID.

Turns out that when the tasks become more difficult or have less clearly defined “correct” answers, the phenomenon becomes even more acute. Asch did some follow-up studies where he asked subjects questions about politics (such as what were the most critical political issues of the day) and found that he could influence people’s answers by inserting confederates into the group who asserted certain answers. Other studies have shown that bartenders or baristas can get you to tip more if they prime their tip jars with their own cash, simply because it makes you think that everyone else is tipping generously 4. These studies tie in with a lot of other things we know about human nature when it comes to conformity, submission to authority, and peer pressure. We’re often very willing to look to our peers –or even complete strangers– to define reality for us.

So what does this have to do with video games? Glad you asked. I’m sure you’ve noticed that you can’t shop on many online stores these days without being shown the ratings given to each product by other shoppers. Go shop for a new release on Amazon.com or GameStop.com and you’ll see user ratings quite prominently. Most websites that feature game reviews also have user reviews alongside their “official” ones, and file download sites like FilePlanet.com list not only download counts, but star ratings as well. See where I’m going with this? Well, keep reading anyway.

In their book Nudge: Improving Decisions About Health, Wealth, and Happiness 5, authors Richard Thaler and Cass Sunstein describe a study by sociologist Matthew Salganik and his colleagues at Princeton 6 in which the researchers had over 14,000 people visit a faux music download site and browse through music by previously unknown bands before deciding which songs to download. Half the subjects were asked to pick songs based just on song name, band name, and a sample. 7 The other half had all that info, but could also see how many times each song had been downloaded. Psychologists are always pulling crap like this.

What do you think happened based on what I’ve written so far? Well, turns out that subjects exposed to the download counts were WAY more likely to download songs that they thought others had downloaded a lot, and were WAY LESS likely to try music that they thought nobody else was choosing. The quality of the song still mattered, but so did how often subjects thought the song had been downloaded by their peers. Songs that did so-so in the control group were turned into smash hits among those in the experimental group simply by displaying their download counts.

Now, I’m not accusing Amazon.com of inflating its ratings to sell more books 8. And one could argue that in the absence of such malfeasance, download counts and star ratings are real, useful pieces of information that shed some light on the true quality of a product. But nonetheless this is something to be aware of, especially with new files/games/books that haven’t yet amassed ratings or download counts. It’s also worth noting that advertisers can indirectly purchase this kind of influence by buying front-page placement or using ads to drive consumers to that content, thus increasing its popularity –or at least the number of times it was bought or downloaded. And it can work in reverse. Remember a while back when the backlash against Spore’s digital rights management measures caused a bunch of people to flood Amazon with one-star ratings? It still has barely one star out of five as of the time of this writing. The point to remember is that what you see other people doing shouldn’t unduly influence your own actions.

That point made, though, it’s interesting to think about how game designers could use this kind of bias for the player’s benefit –at least potentially. I’m certainly not advocating that they inflate star ratings or player counts, but less sacrosanct data could be used to nudge players in directions they might enjoy. For example, what if in a few months’ time you were sitting down to play through some more of the single-player campaign for Halo Reach when at the main menu there appears the message “Nine people on your friends list have tried the Halo Reach multiplayer modes within the last week. Select ‘Multiplayer’ from the main menu to join them.” Or maybe “1,943 people checked out the leaderboards in the last 5 minutes; press ‘Y’ to do the same.”

I know that the administrators of technologies like Steam, Xbox Live, and GameSpy Technology are awash in data like this and to my uneducated monkey brain it seems like it should be relatively easy to do this kind of stuff on the fly with real data. So somebody go do that and get back to me. In the meantime, I’m gonna go out and start telling strangers that it looks like rain, even though there’s not a cloud in the sky. You know, just to see what they do.

The lesson as always: people are suckers. Groupthink has no effect on me because of my superior and unique brain. Now, excuse me. I have to go play World of Warcraft for 12 hours or I won’t be cool.

This can also explain the small variance in metacritic scores we see for major new games, right?

“Forty-three of your colleagues have given Grand Theft Auto IV a score of higher than 95. Hit ‘post’ to join them.”

Makes an interesting comment on our society and the way we value others’ opinions so highly. Reminds me of the Simpsons:

“50 Million Smokers Can’t be wrong!!” – Pretty much sums it up.

9/10 of my friends believe that this is not true. Now I’m stuck in a believe/not believe loop.

@nickless

Heh. Nice, but logic has no place here.

What no Facebook “like” button? I would have so totally liked this article, especially if my friends did as well. Baaaaaa.

Sheep mentality can benefit just about anyone who deploys sheep-crack like FB Like. Not just games.

Well, it’s important to keep in mind that the people who see the download count are making rational decisions. If you’re presented with unknown bands (or unknown products in general) and your money is on the line, it’s a safer choice to go with what the majority finds interesting instead of that band no one has downloaded or even heard of, which might as well be one guy with a bucket going

“*bonk bonk bonk* Oy wey the bells are calling for another slice *bonk bonk bonk* How many pants? *bonk* I have heard of tuna *bonk bonk*”

While some might argue about how this could be abused, it’s not exactly rocket science (it’s, in fact, psychology).

We’re social animals with a group mentality.

And the most fascinating part of that is the people who frown upon it and use expressions such as “sheep mentality,” which is itself a term used among a certain group of people whom I personally can only see as black sheep. Sheep nonetheless.

However, as long as these data are used without modification, they’re a valid and useful tool in our everyday lives. If nothing else, they help our stores stock bread rather than Schnupfels, for example.

I do wish they’d start stocking stroopwafels tho…

As for using this in design, we already have it in full effect.

Steam will show you directly what your friends are playing, but only if it’s a Steam game.

I don’t, however, see much of an implication inside games. Not one that would cause any issue, anyway. Yes, nine friends played multiplayer. That won’t change anything, even if they’re playing it right now *and* I choose to play multiplayer instead of single-player. I’ve already paid for the product, which is the main reason the product is on the market to begin with.

The only real use would be in areas such as iPhone apps with in-app purchases, using these numbers to motivate buying whatever new map, hat, or leg-hair bleach everyone else is buying.

As a final note, 8 out of 10 dentists, every 3rd woman and many scientists agree – This post is insightful and interesting.

@M.E I am intrigued by your bucket music. Where can I purchase it? I too have heard of tuna.

I just attended a seminar that went into detail about why Amazon is da bomb-diddly of online retailers. The rating system is one of the main reasons. But you know what? I USED to just go by the number of stars something got. Then I got smart, and now I read a number of the reviews (positive and negative) before I drop down any cash.

So, while it might seem likely (almost a gimme) that retailers would game the ratings system to make more sales, Amazon most assuredly doesn’t. Since each rating comes with explanatory text, and the “most helpful” ratings float to the top, it would be a waste of their time to try to put fake stuff in there. Especially when, as the speaker told us, they receive 18 MILLION customers every day. It’s not like they’re hurting for sales.

It’s kind of a feedback loop: Amazon creates a rating system that’s self-curating, so people put more trust in the ratings, and since they trust Amazon, they buy more stuff from there. And therefore more ratings are submitted and curated (“Did you find this review helpful? Yes/No”) and the star ratings become more reliable. So more people come to the site because they’re more confident that they won’t waste their money.

As for M.E.’s comment on the in-game notifications of multiplayer: while this might not be useful to you if you’re focused on single-player, it might bring a feature to your attention that you didn’t know about, or reveal opportunities for play that you didn’t know existed. But I don’t see that information as a trick to get you to play more multiplayer. Unless you have to plunk down extra money to play with your friends. So you pay the money, some other friends see that you paid it, so they pay it too. So yeah, I guess that fits here.

Seth

It seems to me that the Asch experiment is a demonstration of normative social influence, while the song download count experiment might be interpreted as an example of informational social influence. The Sherif study of the autokinetic effect might be a better match for this story, or perhaps both.

Because people still have knee-jerk reactions to how we can be such “dumb conformists” upon hearing about normative influence, it would be instructive to talk about both kinds of influence in an article like this so that people can appreciate the benefits of conformity as well.

Just wanted to offer my suggestions. Great site!

Thanks for the comments! Glad you like the site.